KEY INFORMATION

- I led a project to explore whether it’s possible to use large language models (LLMs) to give tone guidance in customer support.

- The experiments focused on tone detection and enforcement.

- Fast turnaround (3 days).

- This is a dipstick set of tests, not a rigorous study, owing to the timeframe.

- The results were promising from both models used: a 175B-parameter model and a 70B-parameter model.

- Both could consistently differentiate between professional and unprofessional language.

- Both could also use lists of rules to give feedback on acceptable and unacceptable agent utterances.

- The models’ attempts to identify more nuanced differences in tone (e.g. eager vs helpful) were less consistent, perhaps reflecting the subjectivity in interpreting tone and/or the complexity of the task.

- The project won an award (“Creative Trailblazer”) as part of an LLM sprint.

THE PROBLEM

Have you ever spoken to a customer service agent who has really got on your nerves?

Perhaps they are too informal and chatty when you'd prefer something more transactional. Or perhaps you are from a culture where you are accustomed to informality and friendliness, and the agent comes across as cold.

Maybe you just encountered someone who seemed rude or hostile?

It can be hard to control the quality of customer support interactions. Companies want to give agents freedom to be human and do their job with empathy. But sometimes personal or cultural differences lead to messaging that is off-brand or tonally wrong.

Global outsourcing of customer support means customers could be chatting with someone with a very different perception of tone. What might seem empathetic in one culture can be jarring in another.

Even with extensive training, agents are human and make mistakes. What if we could help them with in-product guidance that picks up potentially annoying messaging and suggests improvements before they hit the send button?

Could large language models power this? Are they sophisticated enough to pick up nuances in tone or breaches of quality and then rephrase messages accordingly?

That's what this set of experiments aimed to find out.

THE PROCESS

1. Compile

2. Label

3. Experiment

4. Iterate

COMPILE

The first step was to compile a set of real agent utterances with a broad range of messages to test various tones.

Standards across different customer service teams vary considerably, so the collection covered a wide spectrum of utterances.

The ones that needed guidance fell broadly into three categories: red flags, lack of empathy and wrong tone.

Red flags

- Exposing legal risk

- Sexist

- Flattering

- Inappropriate emojis

Lack of empathy

- Apportioning blame

- Hostile

- Uncaring

- Judgemental

Wrong tone

- Using jargon

- Too colloquial

- Flowery language

- Over-familiar

- Over-enthusiastic

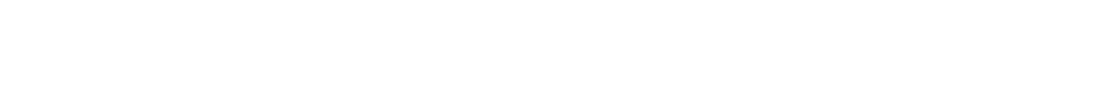

LABEL

Each of the 50-60 utterances was labelled to show what was wrong and paired with a corrected version, for use in the 4 prompt experiments.
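A labelled utterance like this could be stored in a small, validated record. The sketch below is an assumption about how that might look in Python, not the format actually used in the project: the class name `LabelledUtterance`, its field names and the example utterance are all hypothetical. Only the category names come from the Compile step.

```python
from dataclasses import dataclass

# Categories and sub-categories as listed in the Compile step.
TAXONOMY = {
    "red flags": {"exposing legal risk", "sexist", "flattering", "inappropriate emojis"},
    "lack of empathy": {"apportioning blame", "hostile", "uncaring", "judgemental"},
    "wrong tone": {"using jargon", "too colloquial", "flowery language",
                   "over-familiar", "over-enthusiastic"},
}


@dataclass(frozen=True)
class LabelledUtterance:
    """Hypothetical record for one labelled agent utterance."""
    text: str       # what the agent originally wrote
    category: str   # top-level category, e.g. "wrong tone"
    issue: str      # sub-category, e.g. "using jargon"
    corrected: str  # human-written corrected version

    def __post_init__(self):
        # Reject labels that do not exist in the taxonomy above.
        if self.issue not in TAXONOMY.get(self.category, set()):
            raise ValueError(f"{self.issue!r} is not a known issue under {self.category!r}")


# Made-up example for illustration only; not taken from the real test set.
example = LabelledUtterance(
    text="You should have read the returns policy before ordering.",
    category="lack of empathy",
    issue="apportioning blame",
    corrected="I can see why that was unclear. Let me walk you through the returns policy.",
)
```

Validating labels against a fixed taxonomy keeps the set consistent if more than one person is labelling.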

THE 4 EXPERIMENTS

The experiments used different styles of prompt (around 50-100 prompt variations and regenerations per experiment) with two LLMs: one 70B-parameter model and one 175B-parameter model.

LIST OF RULES

Give the model a list of rules to follow, and then a number of good or bad examples of utterances to analyse and rewrite.

PERSONA TEST

Tell the model it is an agent with a persona, then ask it to rewrite example utterances.

TONE IDENTIFICATION

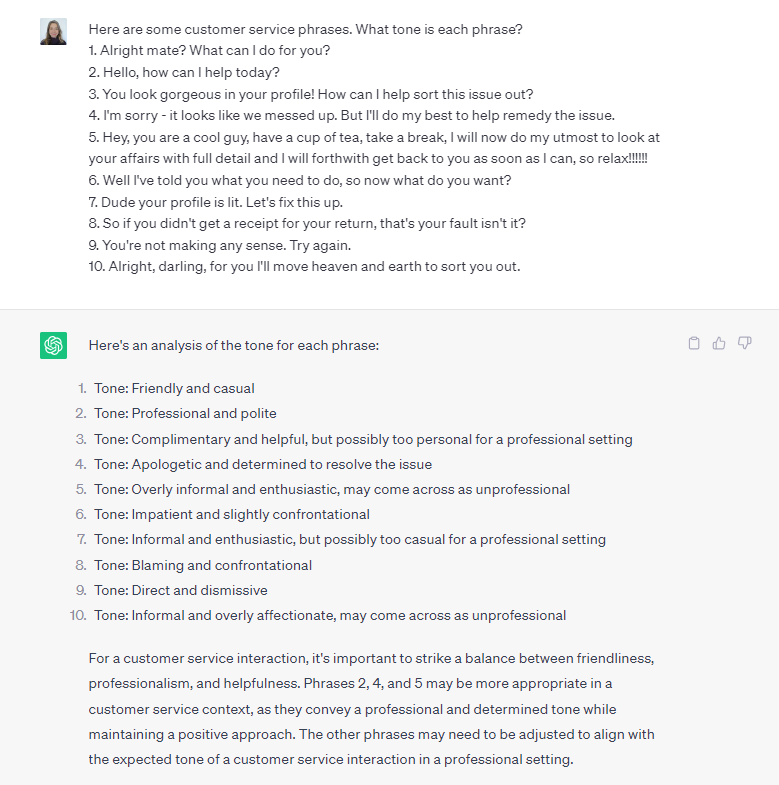

List out some utterances in the prompt and ask the model to identify the tone.

LINGUISTIC INSTRUCTION

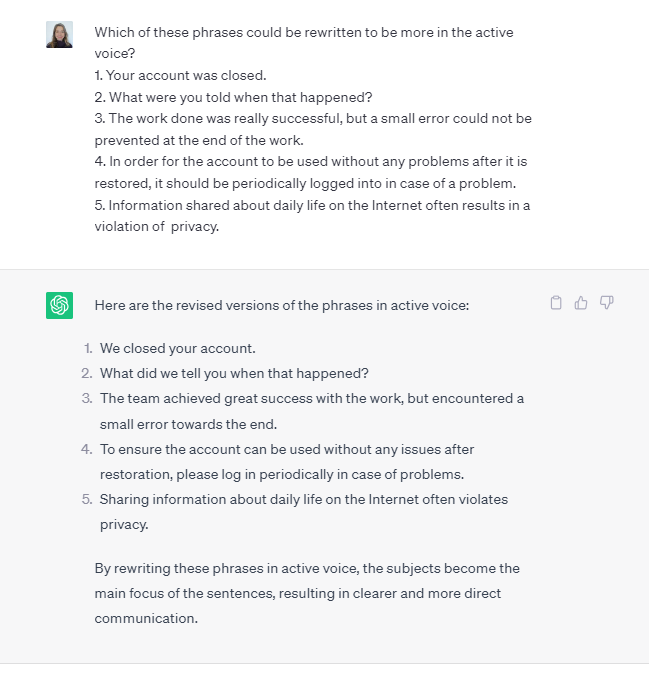

Can the model perform simple content changes like changing phrases from passive to active voice?

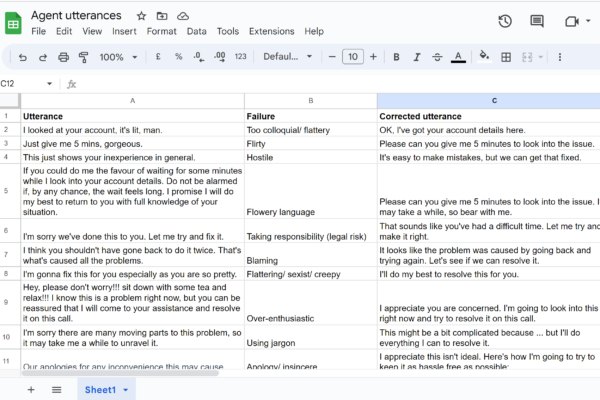

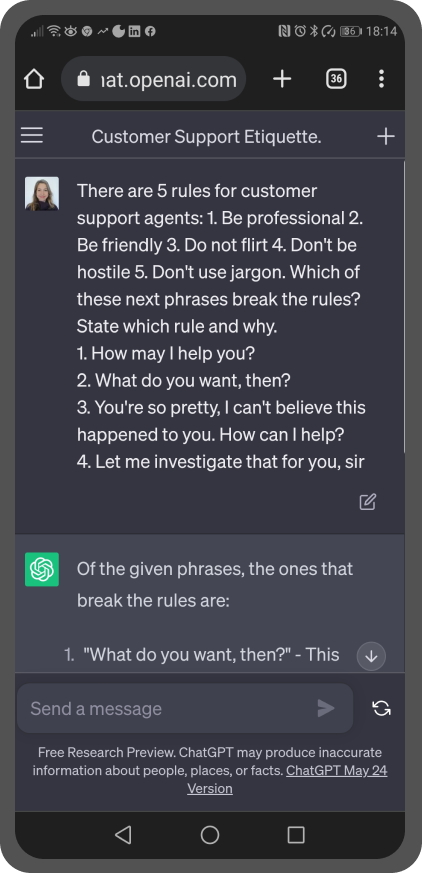

EXPERIMENT 1: LIST OF RULES

I tried various prompts with variations on the following structure:

Here is a list of 5-10 content guidance rules {list of rules}; here is a list of agent utterances {list of utterances}; identify which ones have broken the rules and why, and rewrite them.

Both models could usually detect when a phrase wasn't right. However, they didn't always correctly identify exactly what rules had been broken.

Rewrites were, more often than not, an improvement on the original version.

The most successful prompts specified the desired tone of voice, e.g. "friendly but professional", or specified that these were rules for "customer service". When either was specified in the prompt, the responses were usually close to the mark, with acceptable rewrites even when the models' explanation of what was wrong with the original was inaccurate.
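For anyone wanting to reproduce this prompt structure programmatically, a minimal sketch in Python follows. The function name `build_rules_prompt` and the exact wording of the assembled prompt are assumptions based on the structure described in this experiment; the experiments themselves were run by hand with many variations.

```python
def build_rules_prompt(rules, utterances, tone="friendly but professional"):
    """Assemble a 'list of rules' prompt (hypothetical helper, not the original tooling)."""
    # Number the rules so the model can refer to them by number in its answer.
    rule_lines = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    utterance_lines = "\n".join(f"- {u}" for u in utterances)
    return (
        "Here is a list of content guidance rules for customer service. "
        f"The desired tone of voice is {tone}.\n"
        f"{rule_lines}\n\n"
        "Here is a list of agent utterances:\n"
        f"{utterance_lines}\n\n"
        "Identify which utterances have broken the rules and why, and rewrite them."
    )


# The utterances below are made up for illustration.
prompt = build_rules_prompt(
    rules=["Never say sorry or apologise"],
    utterances=["Sorry about that!", "Thanks for waiting, I have an update for you."],
)
print(prompt)
```

Keeping the tone and the "customer service" framing as explicit parts of the template makes it easy to test prompts with and without them.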

One of the surprises from this experiment for me was that the models could handle quite complex, multi-step instructions.

Another surprise was that the 70B model struggled with more black-and-white rules. One example I tested was "never say sorry or apologise". The model got this wrong on multiple occasions, either failing to identify the apologetic agent, or going too far in the other direction and identifying this as the rule that was broken every time, even when it wasn't.

EXPERIMENT 2: PERSONA TEST

For this prompt experiment, the models were given a personality type and asked to rewrite phrases that were tonally wrong.

You are a customer service agent for {brand + characteristics}. Rewrite the following phrases {list of phrases}.

I experimented with various personae, including "a hip technology company employee", and named various brands (Apple, BT and gov.uk, for example) and characteristics (including "hip", "trendy", "progressive" and "polite").
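Trying every brand with every characteristic by hand gets tedious, so the combinations could be generated instead. This is a rough sketch in Python, assuming the persona template above; the function name `persona_prompts`, the exact template wording and the sample phrase are hypothetical.

```python
from itertools import product

# Wording loosely follows the persona template above; the exact phrasing is an assumption.
TEMPLATE = (
    "You are a customer service agent for {brand}, and you are {trait}. "
    "Rewrite the following phrases:\n{phrases}"
)


def persona_prompts(brands, traits, phrases):
    """Return one prompt per (brand, characteristic) combination."""
    phrase_block = "\n".join(f"- {p}" for p in phrases)
    return [
        TEMPLATE.format(brand=brand, trait=trait, phrases=phrase_block)
        for brand, trait in product(brands, traits)
    ]


prompts = persona_prompts(
    brands=["Apple", "BT", "gov.uk"],
    traits=["hip", "trendy", "progressive", "polite"],
    phrases=["No can do, mate."],  # made-up phrase for illustration
)
print(len(prompts))  # 3 brands x 4 characteristics = 12 prompts
```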

Interestingly, this form of prompt tended to produce high-quality rewrites, particularly from the 70B model, despite being much less prescriptive.

I was interested in getting to an informal but professional voice. The most effective persona for this was the 'hip technology company customer support agent'.

EXPERIMENT 3: TONE IDENTIFICATION EXERCISE

This prompt listed out varying numbers of customer service messages and asked the models to identify the tone of each message.

The models often correctly identified the tone. However, they did not label the tones consistently (especially the 70B model). Thanks to the wonderfully huge number of synonyms in the English language, the same phrase could be labelled completely differently each time, without being wrong.

And let’s face it, tone is also quite subjective. In that sense, the model accurately reflected humanity: one person's description of the same phrase could be completely different to another's. Each time, the response could be valid.

So was this tone identification experiment successful? Well, although the labels applied to different tones varied considerably, they were consistent in terms of where you might put them on a positive to negative spectrum. The model might label the same phrase as ‘hostile’, ‘rude’ or ‘uncaring’, but you’d class all of these as being undesirable and something to correct.
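One way to make that observation testable is to collapse the free-form labels into coarse buckets and check whether repeated runs land in the same bucket. The sketch below is an illustration under assumptions: apart from 'hostile', 'rude' and 'uncaring', the label sets are invented for the example, and a real version would need a much larger, reviewed vocabulary.

```python
# Illustrative label sets. Only 'hostile', 'rude' and 'uncaring' come from the
# experiment write-up; the rest are assumptions added for the example.
NEGATIVE = {"hostile", "rude", "uncaring", "dismissive", "condescending"}
POSITIVE = {"helpful", "friendly", "empathetic", "polite", "professional"}


def polarity(label: str) -> str:
    """Map a free-form tone label to 'negative', 'positive' or 'unknown'."""
    cleaned = label.strip(" '\"‘’.").lower()
    if cleaned in NEGATIVE:
        return "negative"
    if cleaned in POSITIVE:
        return "positive"
    return "unknown"


def consistent_polarity(labels) -> bool:
    """True if every recognised label from repeated runs falls in the same bucket."""
    buckets = {polarity(label) for label in labels} - {"unknown"}
    return len(buckets) <= 1


# Three different labels for the same phrase, all on the negative side.
print(consistent_polarity(["hostile", "Rude", "‘uncaring’"]))
```

Labels that fall outside both sets are ignored rather than guessed at, which keeps the check conservative.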

I also observed that the model made a distinction between professional and unprofessional tones with reassuring consistency.

EXPERIMENT 4: LINGUISTIC INSTRUCTION

This was a very quick experiment to start to look at whether LLMs can act on simple linguistic instructions.

The application for this would be to help customer support agents stick to a brand's style guide.

For example, would an LLM be able to prompt an agent to reword their sentence from the passive voice to the active voice?

Both models coped well with this task.

CONCLUSIONS

The experiments were carried out over just 2 days, so the results are interesting but not robust. More methodical testing needs to be done. With that caveat in mind:

1. Overall, the LLMs tested did well in rephrasing unprofessional-sounding phrases into more professional ones.

2. Sometimes the rephrased sentences were a bit formal, although still an improvement on the worst messages.

3. Results were surprisingly nuanced when it came to instructions around tone.

4. Both models could handle multi-step instructions.

5. Tone labelling was not always consistent. Perhaps this is not surprising given how subjective tone is.

WHAT I'D DO NEXT

These results were interesting, but I’d like to test them more quantitatively. I’d work with a developer to see how we could do this programmatically.
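As a starting point for that conversation, here is a minimal sketch in Python of how detection could be scored against the human labels. It assumes each utterance has a human judgement (needs correcting or not) and a model judgement; the function name `detection_scores` is hypothetical, and the values in the usage example are placeholders, not results from these experiments.

```python
def detection_scores(expected, predicted):
    """Compare human labels with model verdicts.

    Both arguments are equal-length lists of booleans,
    where True means 'this utterance needs correcting'.
    """
    if len(expected) != len(predicted):
        raise ValueError("expected and predicted must be the same length")
    if not expected:
        raise ValueError("at least one utterance is required")

    pairs = list(zip(expected, predicted))
    true_pos = sum(1 for e, p in pairs if e and p)
    false_pos = sum(1 for e, p in pairs if not e and p)
    false_neg = sum(1 for e, p in pairs if e and not p)
    correct = sum(1 for e, p in pairs if e == p)

    return {
        # Of the utterances the model flagged, how many really needed correcting?
        "precision": true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0,
        # Of the utterances that needed correcting, how many did the model flag?
        "recall": true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0,
        "accuracy": correct / len(pairs),
    }


# Placeholder values to show the call shape only.
scores = detection_scores(
    expected=[True, True, False, True],
    predicted=[True, False, False, True],
)
print(scores)
```

Precision and recall are reported separately because over-flagging (as with the "never say sorry" rule) and under-flagging are different failure modes for an agent-facing tool.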

I only tested on two models. I’d be curious to test on others, e.g. Bard.

I’d also work on a prototype to look at how we could use this within customer service interfaces.